The tool, called Nightshade, scrambles training data in ways that can cause serious damage to image-generating AI models.

A new tool lets artists add invisible changes to the pixels in their art before they upload it online, so that if it’s scraped into an AI training set, it can cause the resulting model to break in chaotic and unpredictable ways.

The tool, called Nightshade, is a way to fight back against AI companies that use artists’ work to train their models without the creator’s permission. Using it to “poison” this training data could damage future iterations of image-generating AI models such as DALL-E, Midjourney, and Stable Diffusion by rendering some of their outputs useless: dogs become cats, cars become cows. MIT Technology Review received an exclusive preview of the research, which has been submitted for peer review at the Usenix computer security conference.

AI companies such as OpenAI, Meta, Google, and Stability AI are facing numerous lawsuits from artists who claim their copyrighted material and personal information were scraped without consent or compensation. Professor Ben Zhao of the University of Chicago, who led the team that developed Nightshade, hopes it will help tip the balance of power back from AI companies toward artists by creating a powerful deterrent against disrespecting artists’ copyright and intellectual property. Meta, Google, Stability AI, and OpenAI did not respond to MIT Technology Review’s request for comment.

Zhao’s team also developed Glaze, a tool that allows artists to “mask” their own personal style to prevent it from being scraped by AI companies. It works in a similar way to Nightshade: by changing the pixels of images in subtle ways that are invisible to the human eye but manipulate machine learning models into interpreting the image as something different from what it actually shows.
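To make the idea of a subtle pixel change concrete, here is a minimal sketch in Python. It is not the researchers’ method: it assumes a simple per-pixel cap (`epsilon`, a hypothetical parameter) as a stand-in for “invisible to the human eye,” and the `direction` values are invented placeholders for whatever push an optimizer would compute toward another concept.

```python
def perturb(pixels, direction, epsilon=4):
    """Shift each 0-255 pixel value by at most `epsilon` (assumed cap)."""
    out = []
    for value, step in zip(pixels, direction):
        # Clamp the requested change to the allowed budget.
        delta = max(-epsilon, min(epsilon, step))
        # Keep the result inside the valid 8-bit pixel range.
        out.append(max(0, min(255, value + delta)))
    return out


original = [12, 200, 255, 0, 128]
direction = [9, -9, 9, -9, 1]  # hypothetical push toward another concept
shaded = perturb(original, direction)
max_change = max(abs(a - b) for a, b in zip(original, shaded))

print(shaded)      # [16, 196, 255, 0, 129]
print(max_change)  # 4
```

No pixel moves by more than the cap, which is why an altered image can look unchanged to a person while still carrying a signal a model can pick up.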

The team intends to integrate Nightshade into Glaze, and artists can choose whether or not to use the data poisoning tool. The team is making Nightshade open source, allowing others to tinker with it and create their own versions. The more people use it and create their own versions, the more powerful the tool becomes, Zhao says. Data sets for large AI models can contain billions of images, so the more poisoned images can be scraped into the model, the more damage the technique can do.

A targeted attack

Nightshade exploits a security vulnerability in generative AI models, one that arises from their being trained on vast amounts of data, in this case images collected from the internet. Nightshade tampers with those images.

Artists who want to upload their work online but don’t want their images scraped by AI companies can upload them to Glaze and choose to mask them with an art style different from their own. They can then opt to use Nightshade as well. Once AI developers scrape the internet to get more data to tweak an existing AI model or build a new one, these poisoned samples make their way into the model’s data set and cause it to malfunction.

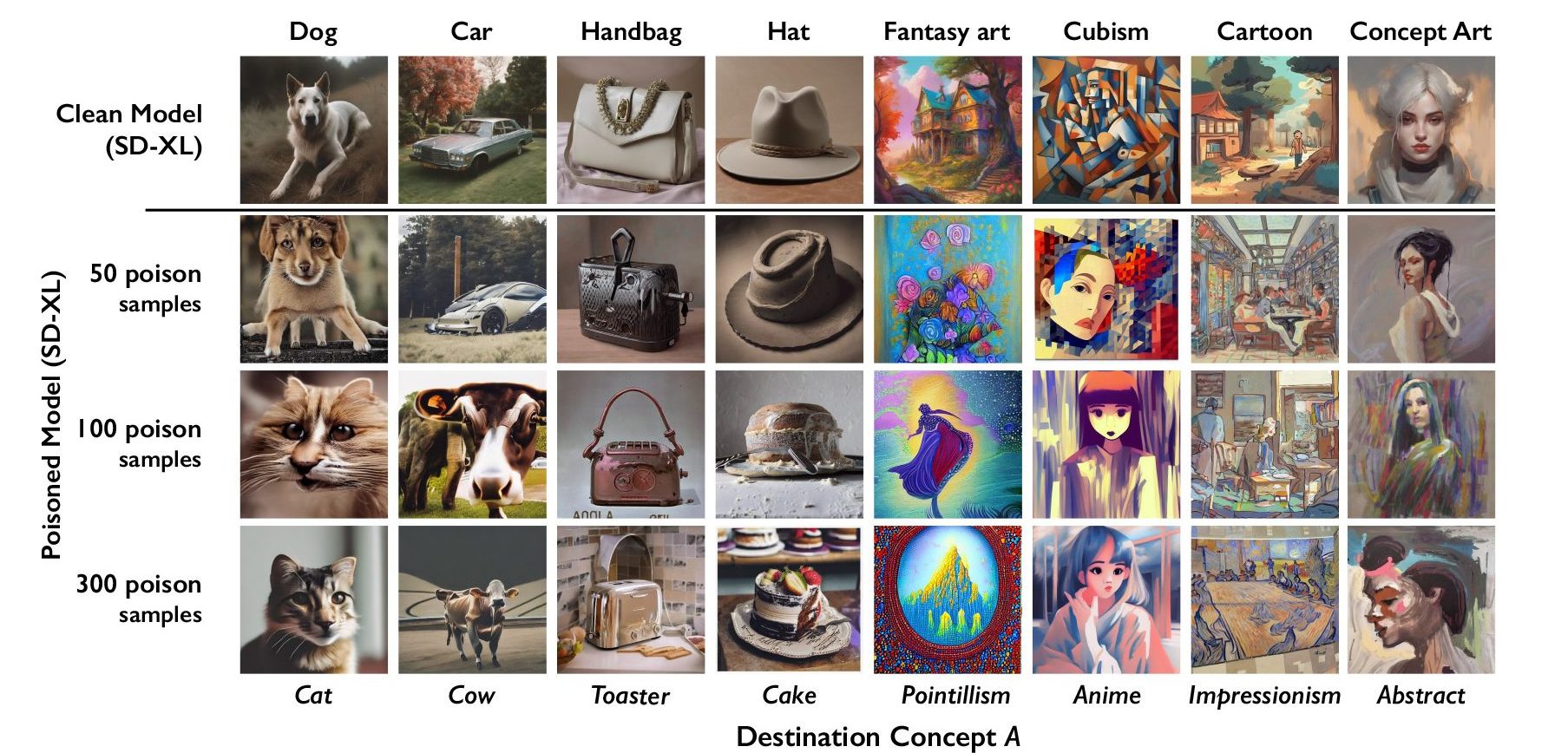

Poisoned data samples can manipulate models into learning, for example, that images of hats are cakes and images of handbags are toasters. Removing the poisoned data is extremely difficult, because tech companies must painstakingly find and delete each corrupted sample.

The researchers tested the attack on Stable Diffusion’s latest models and on an AI model they trained themselves from scratch. When they fed Stable Diffusion just 50 poisoned images of dogs and then prompted it to create images of dogs, the output started to look strange: creatures with too many limbs and cartoonish faces. With 300 poisoned samples, an attacker can manipulate Stable Diffusion into generating images of dogs that look like cats.
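Why a larger dose of poison does more damage can be illustrated with a toy model. The sketch below is an assumption-laden simplification, not how Stable Diffusion or Nightshade works: it treats a “concept” as the average of two-dimensional feature vectors, and the prototypes `DOG` and `CAT`, the function names, and the count of 200 clean samples are all invented for illustration. Only the poison counts of 50 and 300 echo the figures reported above.

```python
import math

DOG = (0.0, 0.0)   # made-up feature vector for genuine dog images
CAT = (10.0, 0.0)  # made-up feature vector for genuine cat images


def learned_concept(n_clean, n_poison):
    """Average the features of every training image captioned 'dog'.

    Clean samples look like DOG; poisoned samples are captioned 'dog'
    but carry CAT-like features.
    """
    total = n_clean + n_poison
    return tuple((n_clean * d + n_poison * c) / total for d, c in zip(DOG, CAT))


def nearest(point):
    """Name the prototype closest to the learned concept."""
    return "dog" if math.dist(point, DOG) <= math.dist(point, CAT) else "cat"


for n_poison in (0, 50, 300):
    concept = learned_concept(n_clean=200, n_poison=n_poison)
    print(n_poison, concept, nearest(concept))
# 0 (0.0, 0.0) dog
# 50 (2.0, 0.0) dog
# 300 (6.0, 0.0) cat
```

In this toy setting a small dose only drags the learned “dog” away from where it should be, while a dose that outweighs the clean samples flips it to “cat.” The tipping point here depends entirely on the invented clean-sample count.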

Courtesy of researchers

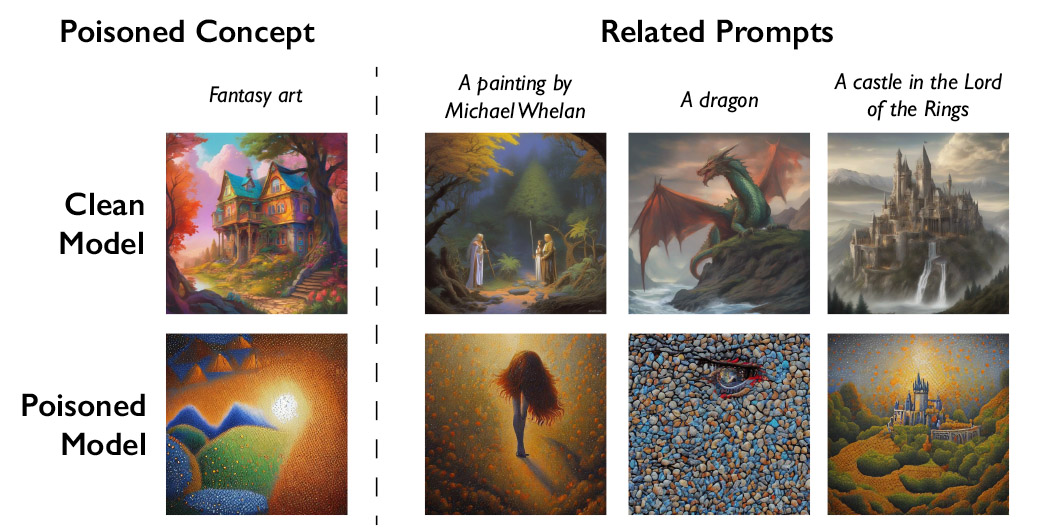

Generative AI models are good at making connections between words, which helps the poison spread. Nightshade infects not only the word “dog” but all similar concepts, such as “puppy,” “husky,” and “wolf.” The poison attack also works on tangentially related images. For example, if the model scraped a poisoned image for the prompt “fantasy,” the prompts “dragon” and “a castle in The Lord of the Rings” would similarly be manipulated into something else.

Courtesy of researchers

Zhao acknowledges that there is a risk that people could misuse the data poisoning technique for malicious purposes. However, he says, an attacker would need thousands of poisoned samples to do real damage to larger, more powerful models, because they are trained on billions of data samples.

“We don’t yet know of robust defenses against these attacks. We haven’t yet seen poisoning attacks on modern [machine learning] models in the wild, but it could be just a matter of time,” says Vitaly Shmatikov, a professor at Cornell University who studies AI model security and was not involved in the research. “The time to work on defenses is now,” Shmatikov adds.

Gautam Kamath, an assistant professor at the University of Waterloo who researches data privacy and robustness in AI models and was not involved in the study, says the work is “fantastic.”

“The vulnerabilities don’t magically go away for these new models, and in fact only become more serious,” says Kamath. “This is especially true as these models become more powerful and people place more trust in them, since the stakes only rise over time.”

A powerful deterrent

Junfeng Yang, a computer science professor at Columbia University who studies the security of deep learning systems and was not involved in the work, says Nightshade could have a big impact if it makes AI companies more respectful of artists’ rights, for example by making them more willing to pay royalties.

AI companies that have developed generative text-to-image models, such as Stability AI and OpenAI, have offered to let artists opt out of having their images used to train future versions of the models. But artists say this is not enough. Eva Toorenent, an illustrator and artist who has used Glaze, says opt-out policies require artists to jump through hoops and still leave tech companies with all the power.

Toorenent hopes Nightshade will change the status quo.

“It’s going to make [AI companies] think twice, because they have the potential to destroy their entire model by taking our work without our consent,” she says.

Another artist, Autumn Beverly, says tools like Nightshade and Glaze have given her the confidence to post her work online again. She had previously removed it from the internet after discovering that it had been scraped without her permission into the popular LAION image database.

“I’m so grateful that we have a tool that can help return the power back to artists for their own work,” she says.